CSVSource is a command line tool written in Rust to convert a CSV file to something else, including SQL. It has special features like grouping insert statements in transaction chunks and inserting multiple rows with a single insert statement.

1. Why CSVSource

Using cloud providers to run our business has been a game changer. They allow us to rapidly provision all the resources to ensure high availability, security, and performance. In many cases, They also manage resources for us, considerably reducing complexity and saving time. However, the more control we delegate to them the less autonomy we have over those resources. This is a good thing because it pushes towards the adoption of good practices, but it can also be challenging sometimes.

Take the provision of a managed PostgreSQL Server on Azure, for example. Azure ensures it is optimally configured for our needs, but it is quite limited when it comes to seeding the database using a CSV file. This is relatively easy doing with direct access to the server, but not possible to do in a managed instance.

To solve this problem to all platforms, all cloud providers, and all SQL databases, we have developed a simple tool capable of converting a CSV file into a SQL file, where rows turn into insert statements. All you need after using CSVSource is a mean to execute the generated SQL script.

2. Using CSVSource

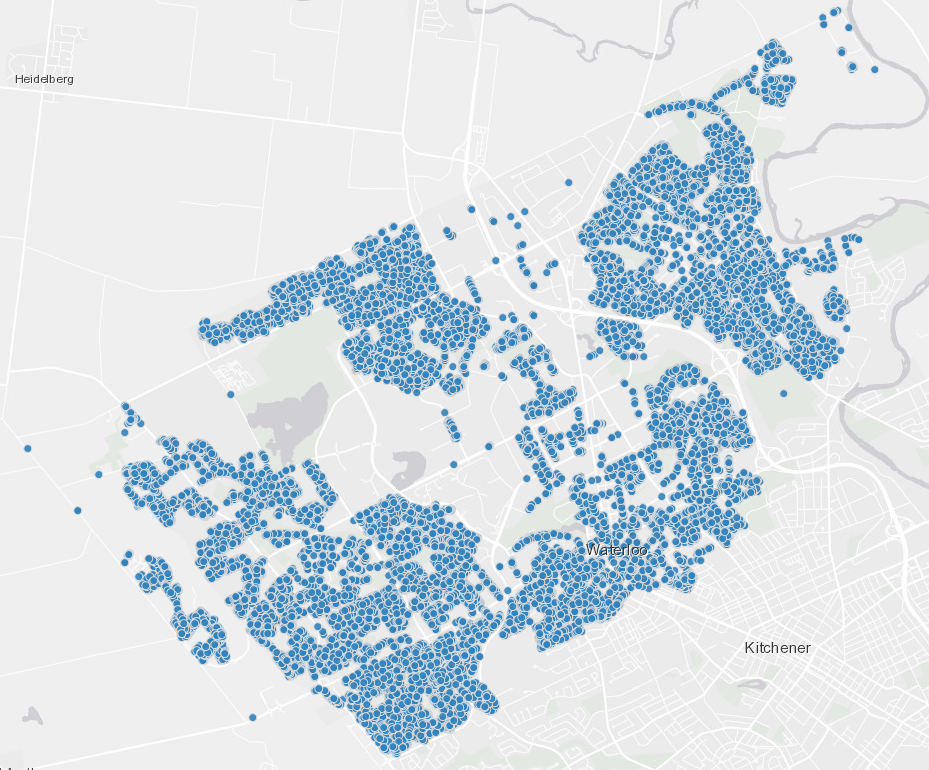

The City of Waterloo, located in Ontario, Canadá, has an Open Data Portal. It publishes raw data about infrastructure, services, environment, transportation, etc. Residents can use the data to oversee public investments and services, identify gaps, discover development opportunities, and even create new business. For us, this portal is useful to test CSVSource. There is an interesting dataset we want to start with: an inventory of every single tree planted in the streets of Waterloo.

This dataset is available in the folder /examples. We can process it with the following command:

$ csvsource --source waterloo_tree_inventory.csv

which generates the sql file waterloo_tree_inventory.sql. This is what happens by default:

-

the name of the CSV file is used as the name of the table in the insert statement and the name of the SQL file.

-

the first line is skipped because it contains the headers that describe the data.

-

the headers in the first line are used as columns of the table.

-

the column separator is comma.

-

each line in the CSV turns into an insert statement.

-

all values are quoted as string.

2.1. Supported Command Line Arguments

These default behaviours can change using arguments. To specify a custom SQL filename, different from the CSV filename, use the argument --target or g:

$ csvsource --source waterloo_tree_inventory.csv --target path/to/waterloo_trees.sql

To set a table name different from the CSV filename, use:

$ ./csvsource --source waterloo_tree_inventory.csv --table TREE_INVENTORY

If the first line contains data instead of headers then indicate it using --headers or -h:

--headers false

In case of --headers false, the columns must be informed manually, using multiple --column or -c:

-h false -c coord_x -c coord_y -c street -c species ...

Columns can optionally be informed with -h true, since the user may want to customize the columns in the insert statements.

If the file is using tab or semicolon characters as value delimiters instead of comma, then specify it with --delimiter or -d:

--delimiter tab

If required, the insert statements can be in a transaction scope, beginning with begin transaction and ending with commit. Use the argument --with_transaction or -w:

--with_transaction true

To enable automatic type recognition, use the argument --typed or y:

--typed true

If you need to put some SQL statements or documentation before the generated statements, at the beginning of the file, use the argument --prefix or -p:

--prefix waterloo_tree_inventory_prefix.txt

It is also possible to put content at the end of the file, using the argument --suffix or -s:

--suffix waterloo_tree_inventory_suffix.txt

Prefix and suffix are actually template files and support variables that can be replaced by values at runtime. At this point in time, only one variable is supported:

-

table: the name of the csv file or the value of the argument--table.

Sometimes the CSV file is too large and generates too many insert statements to the point a single database transaction cannot cope. To create several transactions throughout the file, use --chunk or -k:

--chunk 10

It puts chunks of 1000 inserts between begin transaction and commit. The insert statements can be further optimized, inserting several records at once by using --chunkinsert or -i:

--chunkinsert 10

A proper configuration of --chunk and chunkinsert can optimize the SQL file maximum performance. In this case, a CSV file with 1000 records would be converted into a SQL with 10 transactions and each transaction would contain 10 inserts with 10 records each.

2.1.1. Argument Summary

The following table summarizes all supported arguments, also accessible through: csvsource --help.

| Argument | Short | Description | Options | Default |

|---|---|---|---|---|

|

|

Relative or absolute path to the CSV file. The file’s name is also used as table name and target file’s name, unless specified otherwise by the arguments |

NA |

Mandatory |

|

|

Relative or absolute path to the target file. |

NA |

Name of the CSV file with the target extension instead. |

|

|

The supported CSV value delimiter used in the file. |

comma, semicolon, tab |

comma |

|

|

Database table name if it is different from the name of the CSV file. |

NA |

Name of the CSV file |

|

|

Consider the first line in the file as headers to columns. They are also used as sql column names unless specified otherwise. |

true, false |

true |

|

|

Columns of the database table if different from the name of the labels. |

NA |

CSV headers. Required if |

|

|

Indicates whether SQL statements are put in a transaction block or not. This argument is ignored if the argument chunk is used. |

true, false |

false |

|

|

Indicates whether the values type are declared, automatically detected or everything is taken as string. |

true, false |

false |

|

|

Size of the transaction chunk, indicating how many insert statements are put within a transaction scope. |

NA |

0 |

|

|

Size of the insert chunk, indicating how many lines of the CSV files are put in a single insert statement. |

NA |

0 |

|

|

File with the content to put at the beginning of the SQL file. Example: It can be used to create the target table. |

NA |

NA |

|

|

File with the content to put at the end of the SQL file. Example: It can be used to create indexes. |

NA |

NA |

2.2. Type Handling

In a SQL insert statement, it is important to know the type of data to generate the right syntax. Strings and dates are delimited by single quotes ('), but numbers and booleans are not. CSVSource has mechanisms to detect data types, but it can also be unpredictable. Take a value that contains only numbers, but it isn’t necessarily numeric, like a social security number. CSVSource will automatically identify it as numeric, but in another row it finds "none", making it a string.

3. About this Documentation

This documentation is written in Asciidoc. We use Asciidoctor to compile it to HTML and PDF.

$ asciidoctor docs/index.adoc $ asciidoctor-pdf docs/index.adoc